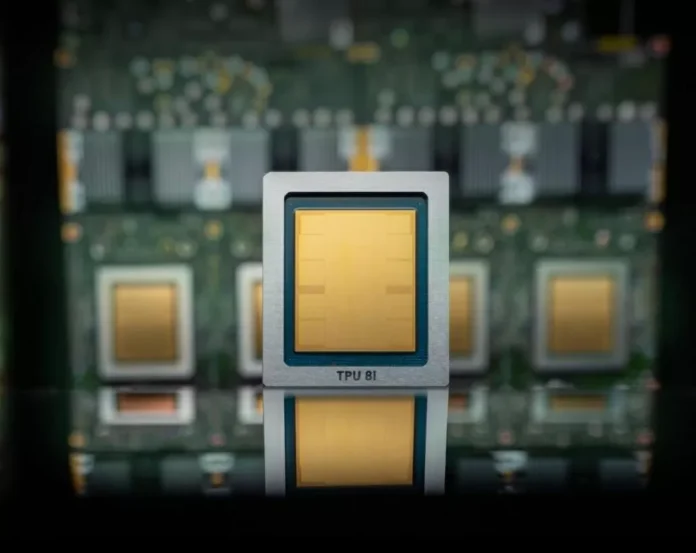

Google has long designed its own hardware for artificial intelligence (AI), most visibly its Tensor Processing Units (TPUs). These specialized chips are built to handle the complex computations that AI and machine learning workloads require, and they are an essential component of Google’s cloud infrastructure.

Recently, Google announced its newest TPUs, the TPU v4, which the company says are faster and more cost-effective than previous generations. This is good news for businesses and developers who rely on Google’s cloud services for their AI needs. What caught the attention of many, however, was Google’s decision to keep offering Nvidia’s GPUs in its cloud, at least for now.

This move may seem surprising, given Google’s history of developing its own hardware for its services, but it makes sense in context. Let’s take a closer look at why Google is still embracing Nvidia in its cloud despite the release of its faster and cheaper TPUs.

First, Google’s TPUs and Nvidia’s GPUs serve different purposes. TPUs are designed specifically for AI and machine learning tasks, while Nvidia’s GPUs cover a broader range of applications, including gaming, data analytics, and scientific research. Cloud customers running those other workloads still need GPUs, so Google’s TPUs cannot completely replace Nvidia’s GPUs in its cloud infrastructure.

Moreover, Nvidia is a long-standing partner of Google and has provided GPUs for its cloud services since 2017. The partnership benefits both companies: Google gains access to Nvidia’s powerful GPUs, and Nvidia expands its reach in the cloud market. It’s no surprise that Google is not ready to let go of this partnership just yet.

Another factor is the current demand for AI and machine learning services. As more industries adopt AI, demand for specialized hardware like TPUs is rising with it. Google’s TPUs may be faster and cheaper, but that also makes them sought after, and it will take time for Google to scale up production to meet the demand. In the meantime, Nvidia’s GPUs can help fill the gap and give Google’s cloud customers the compute capacity they need.

But perhaps the most significant reason for Google’s decision is that the two kinds of chips complement each other. Offered side by side, Google’s TPUs and Nvidia’s GPUs give customers a more comprehensive and efficient set of options for AI and machine learning tasks. This is especially true for complex projects that require a combination of different hardware and software.

Google is also not the only company embracing Nvidia’s GPUs in its cloud. Other tech giants, including Amazon and Microsoft, use Nvidia’s GPUs in their cloud services, which further highlights the importance and versatility of Nvidia’s GPUs in the cloud market.

So, what does the future hold for Google’s TPUs and Nvidia’s GPUs in the cloud? It’s difficult to say for sure, but both companies are likely to keep playing a significant role in the advancement of AI and machine learning. And as demand for these technologies grows, we can expect to see more collaboration between Google and Nvidia.

In conclusion, Google’s newest TPUs may be faster and cheaper, but the company’s decision to keep Nvidia’s GPUs in its cloud is a strategic move that benefits both parties. The two chip families cover different workloads, and the GPUs add capacity while TPU supply catches up with demand. As that demand continues to rise, expect further developments from both Google and Nvidia.